Why AI Prompt Logging Is a Must.

No logs. No scope. No renewal. AI is the fastest unlogged channel in every client stack you manage, and the first question after a leak never changes.

When a leak hits the news, the first call you take is always the same: “Did it happen here, when, and by whom?” Without prompt logs, the best answer an MSP can offer is “we don’t know.” That is the sentence that ends contracts.

The MSP Blind Spot

You’re responsible for AI risk you can’t see.

Unknown adoption.

Your client says “we don’t really use AI.” Dashboards say 40% of their staff do, every day. Guess which number appears in the breach disclosure.

Shadow app sprawl.

ChatGPT, Claude, Copilot, Gemini, and a long tail of niche tools live inside the browser. Your existing DLP sees encrypted traffic, not content.

No forensic floor.

When something leaks, investigation starts at zero. Hours get billed, no definitive answer gets produced, and the client loses confidence in the process.

Missing QBR story.

You cannot report on an AI risk posture you do not measure. The competitor who walks in with an adoption dashboard owns the next conversation.

What Breaks Without Logs

Four scenarios that start with “we don’t know.”

The 2am call.

“Hacker News says ChatGPT leaked data from our sector. Are we affected?” Without logs, you offer “we don’t know.” The client calls a lawyer. You call the CFO.

The departing employee.

“We think our outgoing engineer pasted the pricing model into Claude.” Without logs, you are guessing. With logs, the question is closed before lunch.

The ticket spike.

You banned ChatGPT to be safe. Users now route through personal devices and phones. Help desk calls triple. Shadow IT won. Logging lets you enable, not ban.

The AI line item on the RFP.

“How do you track, log, and retain client AI usage?” The MSP who answers “at the prompt level” wins the bid. Everyone else explains a gap.

Sources

Microsoft & LinkedIn Work Trend Index 2024 · Cyberhaven Generative AI Data Study 2024 · Netskope Cloud & Threat Report 2024 · Gartner AI Governance Survey 2024

The Fix

DefensX Prompt Logging, a baseline for every AI-enabled client stack.

DefensX captures every prompt, response, and data-flow event across ChatGPT, Claude, Copilot, and Gemini, from inside the browser. Users stay productive. You get a baseline. Incidents close in seconds, not sprints.

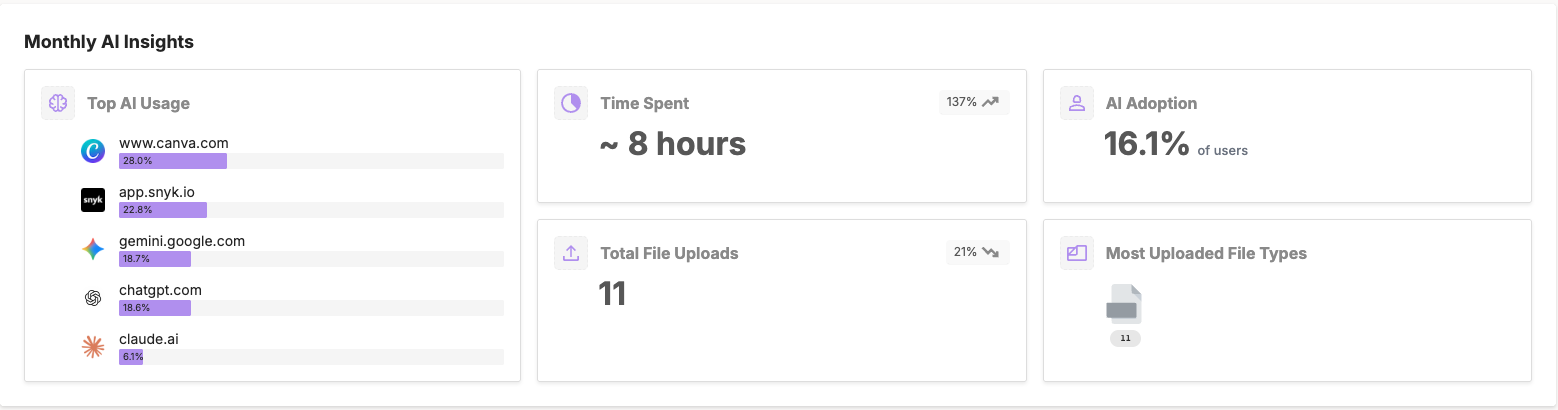

Client says “we don’t really use AI.” The dashboard says otherwise.

Without Prompt Logging

You take the client at their word, move on, and the exposure sits with the 40% of their staff who use AI every day. Six months later, their IP surfaces in a public model response, and the blame lands on the MSP who never looked.

With DefensX

The AI Insights Dashboard surfaces adoption, active tools, and time spent on day one. You walk into the next QBR with a measured policy recommendation, a risk score, and an upsell path instead of a question.

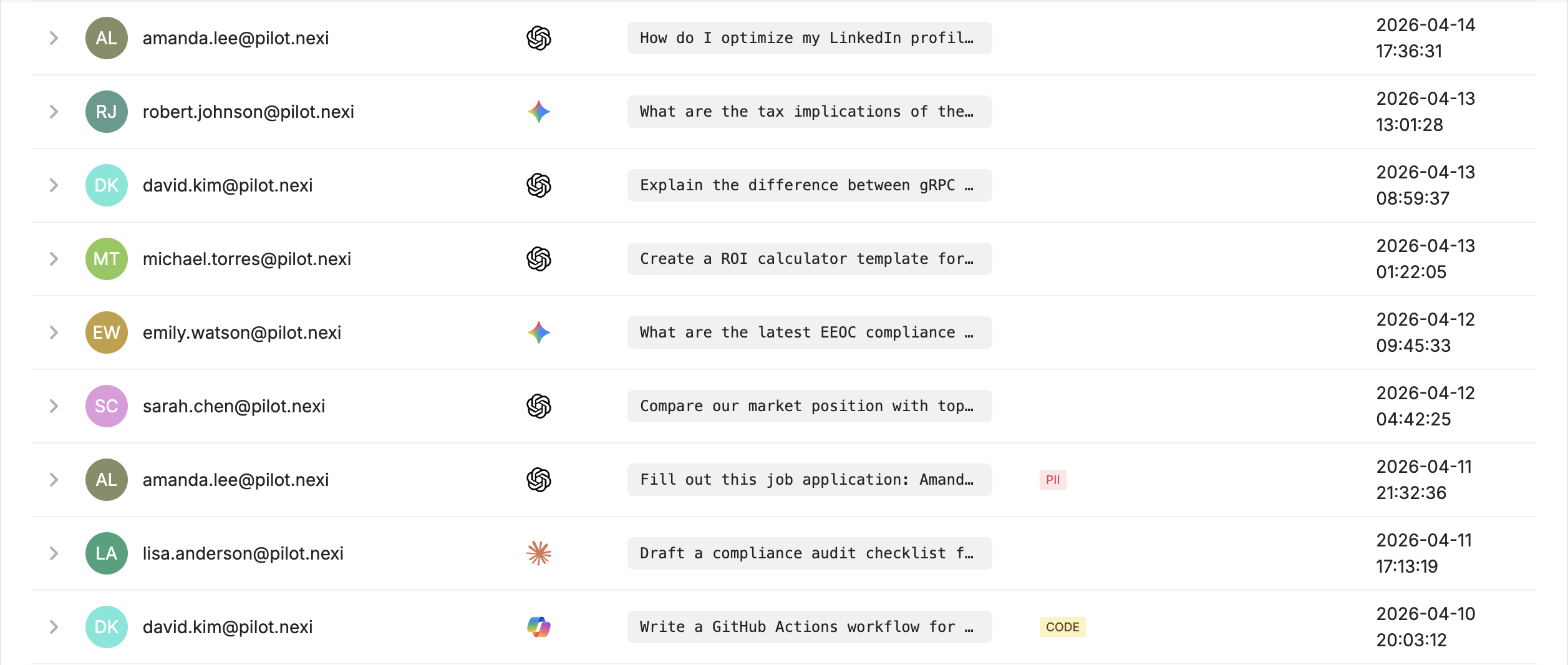

A departing employee is suspected of pasting IP into Claude.

Without Prompt Logging

Investigation starts at zero. You bill hours of archaeology across endpoints, DLP alerts, and HR notes, and still land on “probably.” The client loses confidence in the process even when the answer is favorable.

With DefensX

Prompt Logging exports the user’s full AI interaction history in one query. You deliver a scoped, timestamped answer in a morning and close the ticket by lunch. The client sees an MSP that runs toward the problem.
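To make "one query" concrete, here is a minimal sketch of what scoping a single user's AI activity could look like once prompts are stored as structured records. It is illustrative only and is not the DefensX API or export format: the `PromptEvent` record, its field names, and the `scope_user_activity` helper are all hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime


# Hypothetical record shape, not the DefensX schema.
# frozen=True blocks attribute reassignment, a small stand-in
# for an immutable log entry.
@dataclass(frozen=True)
class PromptEvent:
    user: str
    tool: str          # e.g. "Claude" or "ChatGPT"
    device: str
    timestamp: datetime
    prompt: str


def scope_user_activity(events, user, start, end, tool=None):
    """Return one user's events inside [start, end], oldest first.

    Passing `tool` narrows the result to a single AI application.
    """
    hits = [
        e for e in events
        if e.user == user
        and start <= e.timestamp <= end
        and (tool is None or e.tool == tool)
    ]
    return sorted(hits, key=lambda e: e.timestamp)


if __name__ == "__main__":
    # Made-up sample data for the departing-employee scenario.
    log = [
        PromptEvent("j.doe", "Claude", "LAPTOP-17",
                    datetime(2024, 5, 2, 9, 30), "Summarize this pricing model"),
        PromptEvent("a.lee", "ChatGPT", "LAPTOP-04",
                    datetime(2024, 5, 2, 10, 0), "Draft a status email"),
        PromptEvent("j.doe", "ChatGPT", "LAPTOP-17",
                    datetime(2024, 5, 1, 16, 45), "Rewrite my resignation letter"),
    ]
    window = (datetime(2024, 5, 1), datetime(2024, 5, 3))
    for e in scope_user_activity(log, "j.doe", *window, tool="Claude"):
        print(e.timestamp.isoformat(), e.tool, e.device, e.prompt)
```

The point of the sketch is the shape of the answer: a filter over user, time window, and tool returns a timestamped list, which is what turns "probably" into a scoped finding.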

Client rolls Copilot out company-wide. Who owns the risk?

Without Prompt Logging

Per-user configuration across M365, inconsistent policy, and no record of what was prompted. Risk defaults to the MSP by relationship. If client data ends up training a public model, the 2am call comes to you, not Microsoft.

With DefensX

Tenant-level enforcement locks “Improve the Model” off across the Microsoft ecosystem in one setting. Prompts are logged with user and matter context. Copilot stays, risk drops, renewal holds.

DefensX Features At Work

Five capabilities, one billable baseline.

Turns “we don’t use AI” into a measured adoption report, per-client, on day one. Instant QBR material.

Every prompt captured with user, timestamp, and device. Immutable, searchable, exportable. Incident scope becomes arithmetic.

PII, PCI, and IP never leave the browser unmasked. Productivity preserved, no tickets triggered, enablement instead of a ban.

Native ChatGPT and Claude apps redirect to the browser where policy lives. Closes the one governance gap most MSPs miss.

One setting locks “Improve the Model” off across the M365 tenant. Covers fat-client and browser in a single pass.
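As a rough illustration of the masking capability in the list above, the sketch below replaces email addresses and card-like numbers in a prompt before it would be sent. It is an assumption-laden toy, not how DefensX detects or masks data: the `mask_prompt` function, the regular expressions, and the `[EMAIL]` and `[CARD]` placeholders are all hypothetical, and real PII, PCI, and IP detection covers far more than two patterns.

```python
import re

# Hypothetical patterns for illustration; real detection is much broader.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
# 13 to 19 digits, optionally separated by single spaces or hyphens.
CARD_RE = re.compile(r"\b\d(?:[ -]?\d){12,18}\b")


def luhn_valid(digits: str) -> bool:
    """Luhn checksum, used to skip digit runs that are not card numbers."""
    total = 0
    for i, ch in enumerate(reversed(digits)):
        d = int(ch)
        if i % 2 == 1:      # double every second digit from the right
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def mask_prompt(text: str) -> str:
    """Replace emails and Luhn-valid card numbers with placeholders."""
    def mask_card(match):
        digits = re.sub(r"\D", "", match.group())
        return "[CARD]" if luhn_valid(digits) else match.group()

    text = CARD_RE.sub(mask_card, text)
    return EMAIL_RE.sub("[EMAIL]", text)


if __name__ == "__main__":
    print(mask_prompt("Refund jane@example.com on card 4111 1111 1111 1111"))
```

The Luhn check is a design choice to keep order numbers and other long digit strings readable, so the user's prompt stays useful while the sensitive values are masked.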

The MSP Operating Benefit

Not just security. Hours back and contracts kept.

Incident scope in seconds.

When a suspected leak surfaces, you open a query, not a case file. Investigation becomes arithmetic, not archaeology. The client sees an MSP that answers fast.

Per-tenant, one-to-many.

Flip logging on across 50 clients without per-user configuration. Policy inherits by group, rollout takes a morning, and the scaling cost disappears.

A billable governance tier.

“AI adoption report,” “AI risk score,” and “AI incident retainer” become line items on the invoice. Logging is the measurement that monetizes them.

Log every prompt. Keep every client.

One browser-native platform. AI visibility, scope, and a billable governance tier, purpose-built for MSPs.